- Last edited 9 months ago

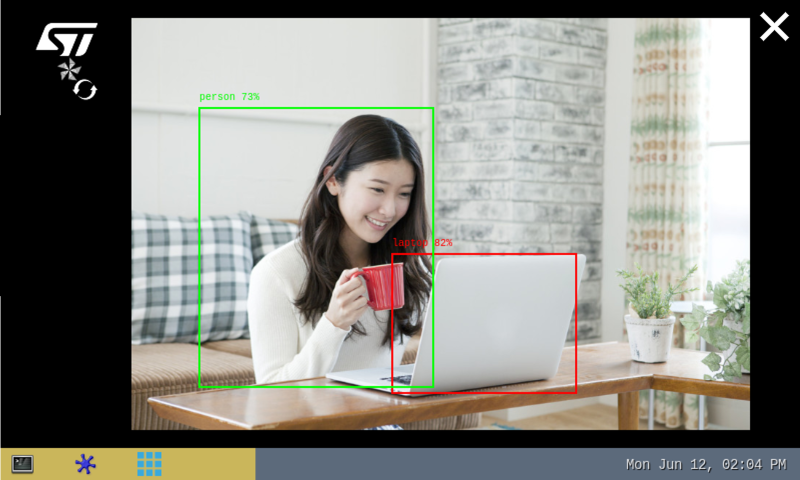

ONNX Python object detection

This article explains how to experiment with ONNX Runtime [1] applications for object detection based on the COCO SSD MobileNet v1 model, using the ONNX Runtime Python™ API.


1 Description

The object detection[2] neural network model allows the identification and localization of a known object within an image.

The application enables three main features:

- A camera streaming preview implemented using GStreamer

- An NN inference based on the camera inputs (or test data pictures) run by the ONNX Runtime [1] interpreter

- A user interface implemented using Python GTK

The performance depends on the number of CPUs available. The camera preview is limited to one CPU core while the ONNX Runtime[1] interpreter is configured to use the maximum of the available resources.
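
The thread configuration described above can be sketched as follows. This is a minimal illustration, not the code of objdetect_onnx.py: the helper names `pick_num_threads` and `create_session` are hypothetical, and the fallback to every CPU core reported by the operating system is an assumption about what "the maximum of the available resources" means.

```python
import os


def pick_num_threads(requested=None):
    """Return the number of threads to give the interpreter.

    Assumption: when nothing is requested, use every CPU core
    reported by the operating system.
    """
    available = os.cpu_count() or 1
    if requested is None:
        return available
    # Clamp the request to the range [1, available].
    return max(1, min(int(requested), available))


def create_session(model_path, num_threads=None):
    """Create an ONNX Runtime CPU session with the chosen thread count."""
    # Imported lazily so pick_num_threads() works without onnxruntime.
    import onnxruntime as ort

    options = ort.SessionOptions()
    options.intra_op_num_threads = pick_num_threads(num_threads)
    return ort.InferenceSession(
        model_path,
        sess_options=options,
        providers=["CPUExecutionProvider"],
    )
```

On a target with two cores, `pick_num_threads()` would return 2, while `pick_num_threads(1)` keeps inference on a single thread, which can be useful when comparing timings.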

The model used with this application is COCO SSD MobileNet v1, downloaded from TensorFlow™ For Mobile & Edge[2] as a .tflite model and converted to the ONNX opset 16 format using tf2onnx.
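
For reference, a conversion of this kind could be reproduced on a host PC along the following lines. This is a sketch only: the article does not give the exact command that was used, the file names `detect.tflite` and `detect.onnx` in the usage note are assumptions, and the helper names are hypothetical. It relies on the `tf2onnx.convert` module entry point with its `--tflite`, `--output` and `--opset` options.

```python
import subprocess
import sys


def build_convert_command(tflite_path, onnx_path, opset=16):
    """Return the tf2onnx command line as a list of arguments."""
    return [
        sys.executable, "-m", "tf2onnx.convert",
        "--tflite", tflite_path,   # input TensorFlow Lite model
        "--output", onnx_path,     # destination ONNX file
        "--opset", str(opset),     # target ONNX opset
    ]


def convert(tflite_path, onnx_path, opset=16):
    """Run the conversion; tf2onnx must be installed on the host."""
    subprocess.run(
        build_convert_command(tflite_path, onnx_path, opset),
        check=True,
    )
```

Calling `convert("detect.tflite", "detect.onnx")` would then write the ONNX model next to the original file, provided tf2onnx and its dependencies are available.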

2 Installation

2.1 Install from the OpenSTLinux AI package repository

After having configured the AI OpenSTLinux package, the user can install the X-LINUX-AI components for this application:

apt-get install onnx-cv-apps-object-detection-python

Then, the user can restart the demo launcher:

systemctl restart weston-graphical-session.service

2.2 Source code location

The objdetect_onnx.py Python script is available:

- in the OpenEmbedded OpenSTLinux Distribution with the X-LINUX-AI Expansion Package:

- <Distribution Package installation directory>/layers/meta-st/meta-st-x-linux-ai/recipes-samples/onnxrt-cv-apps/files/object-detection/python/objdetect_onnx.py

- on the target:

- /usr/local/demo-ai/computer-vision/onnx-object-detection/python/objdetect_onnx.py

- on GitHub:

3 How to use the application

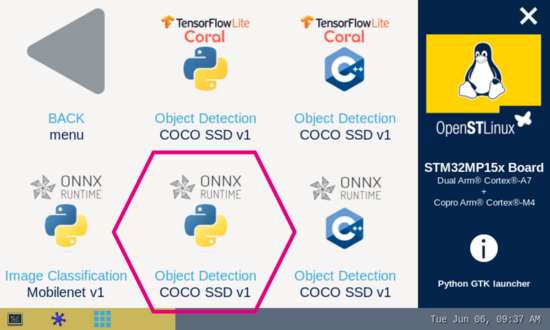

3.1 Launching via the demo launcher

3.2 Executing with the command line

The objdetect_onnx.py Python script is located in the userfs partition:

/usr/local/demo-ai/computer-vision/onnx-object-detection/python/objdetect_onnx.py

It accepts the following input parameters:

usage: objdetect_onnx.py [-h] [-i IMAGE] [-v VIDEO_DEVICE] [--frame_width FRAME_WIDTH] [--frame_height FRAME_HEIGHT] [--framerate FRAMERATE] [-m MODEL_FILE] [-l LABEL_FILE]
                         [--input_mean INPUT_MEAN] [--input_std INPUT_STD] [--validation] [--num_threads NUM_THREADS]
                         [--maximum_detection MAXIMUM_DETECTION] [--threshold THRESHOLD]

options:
  -h, --help            show this help message and exit
  -i IMAGE, --image IMAGE
                        image directory with image to be classified
  -v VIDEO_DEVICE, --video_device VIDEO_DEVICE
                        video device (default /dev/video0)
  --frame_width FRAME_WIDTH
                        width of the camera frame (default is 640)
  --frame_height FRAME_HEIGHT
                        height of the camera frame (default is 480)
  --framerate FRAMERATE
                        framerate of the camera (default is 15fps)
  -m MODEL_FILE, --model_file MODEL_FILE
                        .onnx model to be executed
  -l LABEL_FILE, --label_file LABEL_FILE
                        name of file containing labels
  --input_mean INPUT_MEAN
                        input mean
  --input_std INPUT_STD
                        input standard deviation
  --validation          enable the validation mode
  --num_threads NUM_THREADS
                        Select the number of threads used by the ONNX interpreter to run inference
  --maximum_detection MAXIMUM_DETECTION
                        Adjust the maximum number of objects detected in a frame according to your NN model (default is 10)
  --threshold THRESHOLD
                        threshold of accuracy above which the boxes are displayed (default 0.62)
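
To make the effect of --input_mean, --input_std, --maximum_detection and --threshold more concrete, the sketch below shows one plausible way such values are applied. It is not taken from objdetect_onnx.py. The function names, the dictionary layout of a detection, and the assumption that the model returns parallel lists of boxes, class indices and scores are all hypothetical, and the mean and standard deviation of 127.5 in the usage note are illustrative values only.

```python
def normalize_pixel(value, input_mean, input_std):
    """Scale a raw pixel value as (value - mean) / std (assumed formula)."""
    return (value - input_mean) / input_std


def filter_detections(boxes, classes, scores,
                      threshold=0.62, maximum_detection=10):
    """Keep the detections that should be drawn on screen.

    Only the first `maximum_detection` entries are examined, and a box
    is kept when its score is strictly above `threshold`.
    """
    kept = []
    candidates = list(zip(boxes, classes, scores))[:maximum_detection]
    for box, class_id, score in candidates:
        if score > threshold:
            kept.append({
                "box": box,
                "class_id": int(class_id),
                "score": float(score),
            })
    return kept
```

With a mean and standard deviation of 127.5, `normalize_pixel(255, 127.5, 127.5)` gives 1.0 and `normalize_pixel(0, 127.5, 127.5)` gives -1.0. For three candidate boxes with scores 0.91, 0.62 and 0.40, `filter_detections` with the default threshold keeps only the first one, since 0.62 is not strictly above the threshold.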

3.3 Testing with COCO SSD MobileNet v1

The model used for testing is detect.onnx: the COCO SSD MobileNet v1 model downloaded from TensorFlow™ For Mobile & Edge[2] and converted to the ONNX format.

To launch the Python script more easily, two shell scripts are available:

- launch object detection based on camera frame inputs:

/usr/local/demo-ai/computer-vision/onnx-object-detection/python/launch_python_objdetect_onnx_coco_ssd_mobilenet.sh

- launch object detection based on the pictures located in the /usr/local/demo-ai/computer-vision/models/coco_ssd_mobilenet/testdata directory:

/usr/local/demo-ai/computer-vision/onnx-object-detection/python/launch_python_objdetect_onnx_coco_ssd_mobilenet_testdata.sh
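
The contents of these shell scripts are not reproduced here. As a rough illustration of what an equivalent direct invocation might look like, the sketch below assembles a command line from the options documented in section 3.2. The helper name `build_objdetect_command`, the use of `python3` as the interpreter, and the label file name `labels.txt` in the usage note are assumptions; only the script path, the `detect.onnx` model name and the `coco_ssd_mobilenet` directory come from this article.

```python
import shlex

SCRIPT = ("/usr/local/demo-ai/computer-vision/onnx-object-detection/"
          "python/objdetect_onnx.py")


def build_objdetect_command(model_file, label_file, image_dir=None,
                            video_device="/dev/video0", threshold=0.62):
    """Assemble an objdetect_onnx.py command line from documented options."""
    cmd = ["python3", SCRIPT,
           "-m", model_file,
           "-l", label_file,
           "--threshold", str(threshold)]
    if image_dir is not None:
        # Picture mode: run inference on the images found in a directory.
        cmd += ["-i", image_dir]
    else:
        # Camera mode: read frames from the given video device.
        cmd += ["-v", video_device]
    return cmd


if __name__ == "__main__":
    models = "/usr/local/demo-ai/computer-vision/models/coco_ssd_mobilenet"
    # "labels.txt" is a placeholder name, not confirmed by the article.
    command = build_objdetect_command(
        f"{models}/detect.onnx",
        f"{models}/labels.txt",
        image_dir=f"{models}/testdata",
    )
    print(shlex.join(command))
```

Printing the command rather than executing it keeps the sketch safe to run on a host PC; on the target, the resulting list could be passed to `subprocess.run`.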